The topic of test management comes up every now and again. Some people might remember the Test Tracking Toolkit from DOORS Classic; some might even have been using it recently. We also have a decent test management tool in Rational Quality Manager (RQM). I have been thinking about options for a simpler way of managing small amounts of test data entirely within DOORS Next Generation (DNG).

Because the underlying database architectures differ, simply copying the DOORS Classic model is not ideal. Instead, I have created a model that allows a relatively painless transition to RQM at a later date and takes advantage of some DNG features.

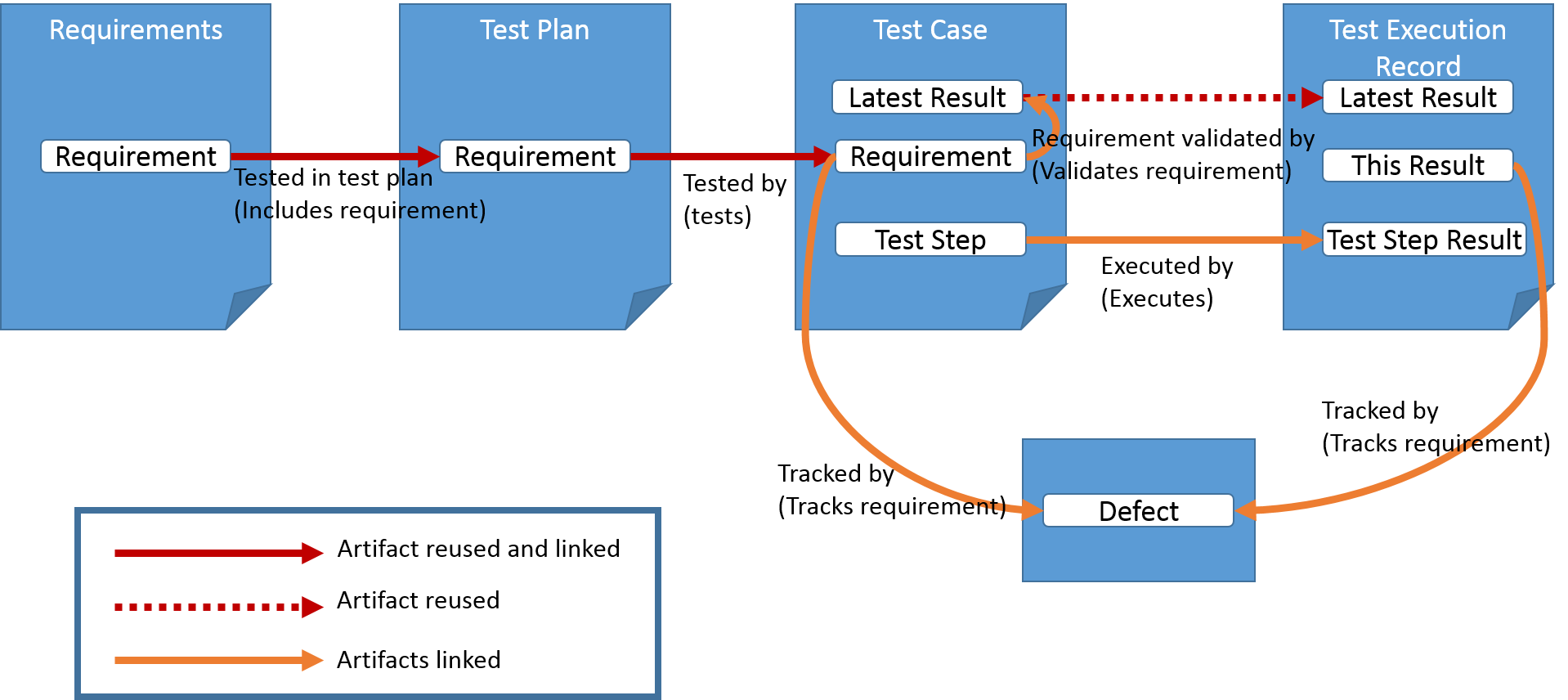

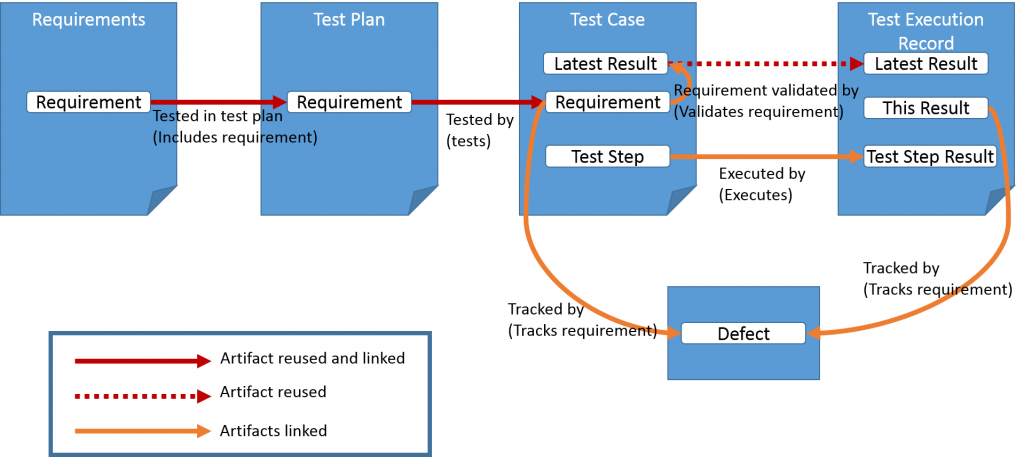

The data model I created is shown below. I will work through it and describe the process that needs to go around it.

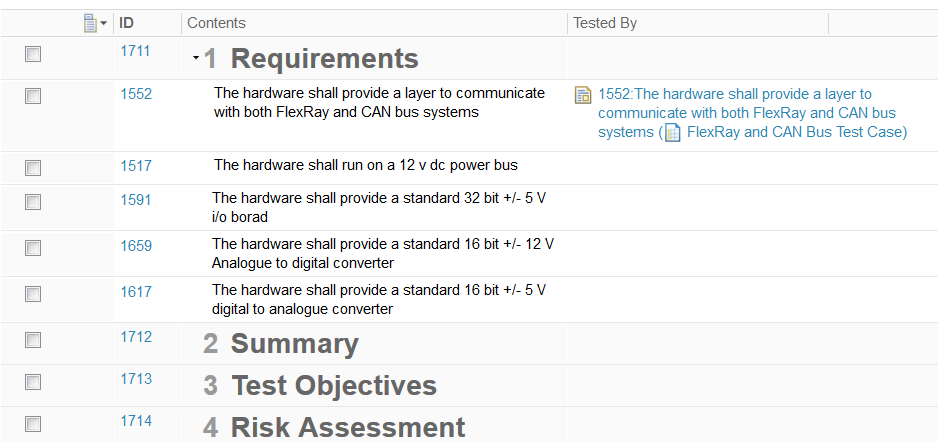

First, there are requirements – after all, we are working in a requirements management tool, so that is a given.

Creating Data

A test plan is created with all the necessary sections, including a ‘Requirements’ section.

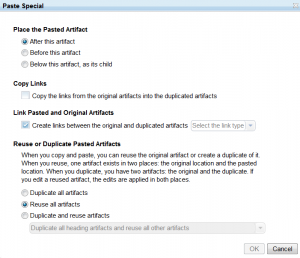

Each requirement is then reused in a test plan module, and a link is made from the requirement usage in the test plan to the usage in the requirements document. This is easiest to do by selecting and copying the requirements, then using ‘Paste Special’ as shown in the image here, choosing to link pasted and original artifacts and to Reuse all artifacts. The link type needs to be selected from the original to the pasted (reused) version. Reusing with links gives traceability, and also ensures that when a requirement changes, the latest version is visible in the test plan.

Now a test case module is created. As a working assumption, there is a one-to-one mapping between test cases and requirements. In practice the exceptions to this ‘rule’ are probably the majority, but it is a good starting point and it keeps the model simple.

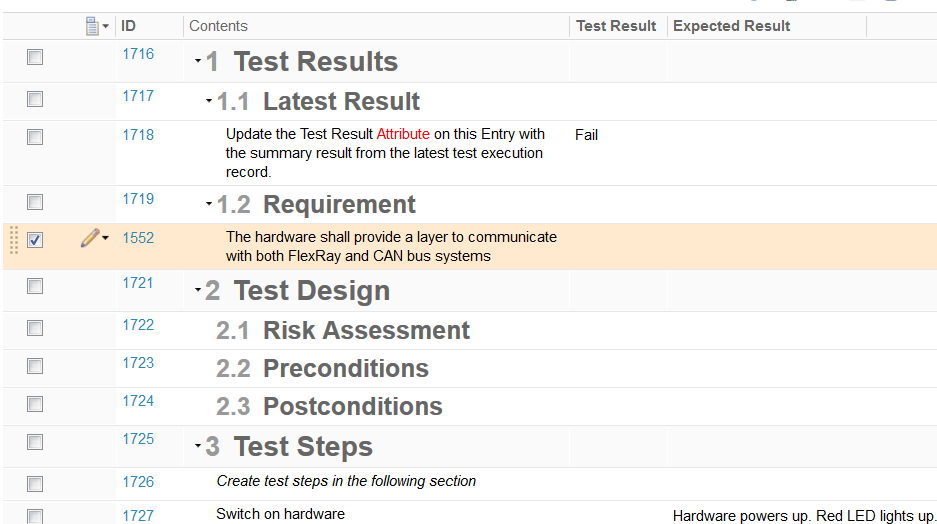

The requirements are again reused in the test cases, and this time linked back to the test plan. The test case also contains a ‘Latest Result’ artifact that holds the most recent result for this test case; as the data model diagram shows, it is linked to the requirement in the test case. I will come back to the Latest Result very soon. The rest of the test case consists of test steps with expected results. Any required images go in the default description attribute of the test step, and can be referred to from the expected result attribute if necessary.

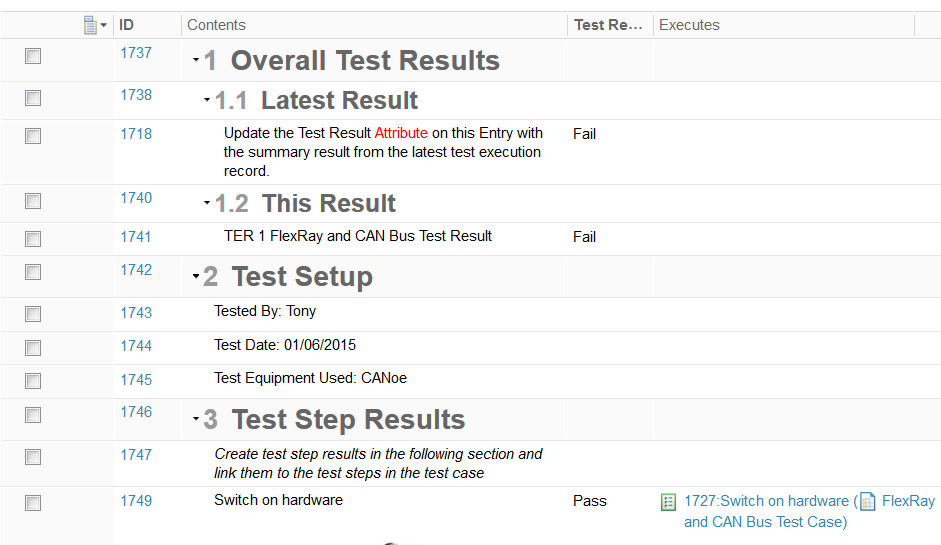

The final piece is the Test Execution Record module. Here, the Latest Result is reused from the test case, but not linked. A new ‘This Result’ artifact is added, along with one Test Step Result artifact per Test Step, each linked back to its Test Step (1:1). The easiest way to create these is again with copy and Paste Special, but this time choosing to create new artifacts along with the links. The artifact type can then be changed to Test Step Result, and the description content remains the same.

| Artifact | Artifact Type | Attributes | Expected Values |
|---|---|---|---|
| Latest Result | Test Result | Description | Description of how to use |
| | | Test Result | Pass, Fail, Not Tested |
| This Result | Test Result | Description | Description of how to use |
| | | Test Result | Pass, Fail, Not Tested |
| Test Step | Test Step | Description | Text plus images |
| | | Expected Result | Text |
| Test Step Result | Test Result | Description | Text plus images |
| | | Test Result | Pass, Fail, Not Tested |
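For anyone who finds it easier to think in code, the artifact types in the table can be sketched as Python data classes. This is only an illustration of the model's shape, not DNG's API or storage format: the class names, the `Verdict` enum and the `new_step_results` helper are hypothetical, and links are modelled as plain object references. The helper mimics the Paste Special step described above, creating one new Test Step Result per Test Step with the same description and a 1:1 link back.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Verdict(Enum):
    """Allowed values of the 'Test Result' attribute (hypothetical enum)."""
    PASS = "Pass"
    FAIL = "Fail"
    NOT_TESTED = "Not Tested"


@dataclass
class TestResult:
    """Artifact type used for both Latest Result and This Result."""
    description: str                          # description of how to use
    test_result: Verdict = Verdict.NOT_TESTED


@dataclass
class TestStep:
    description: str                          # text plus images
    expected_result: str                      # text


@dataclass
class TestStepResult:
    description: str                          # copied from the step
    step: TestStep                            # 1:1 link back to the Test Step
    test_result: Verdict = Verdict.NOT_TESTED


@dataclass
class TestCase:
    requirement_id: str                       # the reused requirement (identifier only)
    latest_result: TestResult                 # linked to the requirement in the model
    steps: List[TestStep] = field(default_factory=list)


def new_step_results(case: TestCase) -> List[TestStepResult]:
    """Hypothetical equivalent of Paste Special with 'create new' plus links:
    one new result per step, same description, linked back to its step."""
    return [TestStepResult(description=s.description, step=s) for s in case.steps]
```

Holding the link as a reference, rather than copying the expected result into the Test Step Result, matches the idea of the tester reading expected results from the linked step.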

Creating Templates

A Test Plan Module template is the first one to create. This contains all the expected section headings and any relevant boilerplate text.

A Test Case Module template again contains standard sections and boilerplate. Additionally, it needs a Latest Result artifact and a placeholder for the requirement(s) so that they are not forgotten. Creating a first test step is also helpful, because artifacts added after it will then default to the Test Step type.

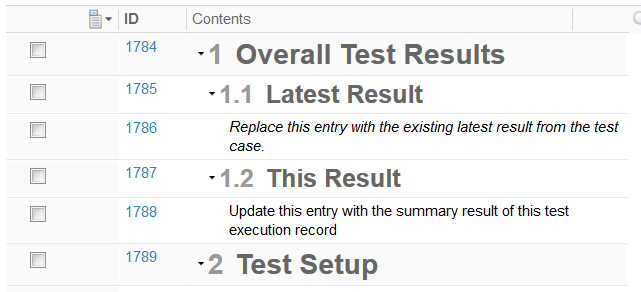

Once test cases have been created from the template, a Test Execution Record template is needed for each test case. This has the reused Latest Result, a This Result, and the appropriate Test Step Results, linked back to the Test Steps. It also needs to contain a place to capture test environment data, such as the date and time of the test, the tester, the equipment in use, and other useful information.

Creating Views

Views bring this model to life, and should be created before the templates so that they can be included as part of each template.

In the Test Plan, a column to show the related test cases is useful for navigation and gap analysis.

In the Test Case, a column to show the Expected Results is useful, as is a column to show the Status of the Latest Result.

In the Test Execution Record, a column to show the traceability to Test Steps allows the tester to view the expected results through the rich hover capability. A column to show the Test Results is also necessary.
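The gap analysis that the Test Plan's test-case column supports can be expressed as a simple query over link data. The sketch below assumes, hypothetically, that the requirement identifiers in the test plan and the links from test cases back to those requirements have been exported into plain Python structures; the function names are illustrative and not part of any DNG interface.

```python
from collections import Counter
from typing import Dict, List, Set


def requirements_without_tests(plan_requirements: List[str],
                               tested_by: Dict[str, Set[str]]) -> List[str]:
    """Return requirements in the test plan that no test case links to.

    tested_by maps a requirement identifier to the identifiers of the
    test cases linked to it (hypothetical export format).
    """
    return [req for req in plan_requirements if not tested_by.get(req)]


def latest_result_summary(latest_results: Dict[str, str]) -> Counter:
    """Count test cases by the status of their Latest Result."""
    return Counter(latest_results.values())
```

For example, `requirements_without_tests(["R1", "R2"], {"R1": {"TC1"}})` returns `["R2"]`, which is the gap a reader would spot as an empty cell in the Test Plan view.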

Using the tool

Setting up a project

When setting up the project, the link types and artifact types need to be created, or brought in as a template from elsewhere. What I have suggested is a starting point, not the perfect solution for all situations.

The module templates come next, once the link and artifact types are in place. They should mirror the current documents in terms of sections and information content, and also contain the specific data described here.

Building test data

Test plans can be created early in a project, even if they are not completed until later. Test cases follow a little later, although skeleton versions can appear relatively early. Once a test case is written, the Test Execution Record template for that test case can be created.

Naming conventions and folder structures are important to keep track of the data, particularly as testers will have to create a new Test Execution Record module from the template for every test run.
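As an illustration of what a consistent convention might look like, here is a small sketch that generates module names and folder paths. The `TER-` prefix, the date-and-run-number format and the folder layout are all hypothetical choices, not anything DNG requires.

```python
from datetime import date


def execution_record_name(test_case_id: str, run_date: date, run_number: int) -> str:
    """Hypothetical naming convention for a Test Execution Record module.

    Zero-padded dates and run numbers keep the names in chronological
    order when sorted as text (for up to 99 runs a day).
    """
    return f"TER-{test_case_id}-{run_date:%Y%m%d}-R{run_number:02d}"


def execution_record_folder(test_plan_id: str, test_case_id: str) -> str:
    """Hypothetical folder layout: one folder per test case under its test plan."""
    return f"Test Execution/{test_plan_id}/{test_case_id}"
```

With this scheme, the second run of test case TC012 on 15 March 2024 would be named `TER-TC012-20240315-R02` and filed under `Test Execution/TP01/TC012` for a plan identified as TP01.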

Running tests

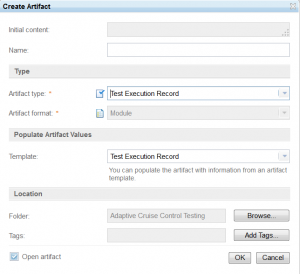

To run a test, first create a Test Execution Record module from the appropriate template.

Add the appropriate Latest Result artifact into the module at the point identified by the boilerplate text.

Capture the test conditions, then run the test and record the results. When the test is complete, populate both the This Result and the Latest Result artifacts with the summary result for the test.

Any defects raised as a result of failures should be linked both to This Result in the Test Execution Record and to the requirement in the test case. This gives traceability from each defect to both the test execution where the failure occurred and the affected requirement.
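The end-of-run bookkeeping can be sketched as follows. Everything here is hypothetical: the classes, the `complete_run` function and, in particular, the rule for rolling step results up into a single summary, which is not defined in the process described here. Because the Latest Result is reused rather than copied, the sketch shares one Python object between the execution record and the test case, so updating it in one place updates it in both.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Tuple


class Verdict(Enum):
    PASS = "Pass"
    FAIL = "Fail"
    NOT_TESTED = "Not Tested"


@dataclass
class ResultArtifact:
    name: str
    verdict: Verdict = Verdict.NOT_TESTED


@dataclass
class ExecutionRecord:
    module_name: str
    this_result: ResultArtifact
    latest_result: ResultArtifact              # reused from the test case: same object, not a copy
    step_verdicts: List[Verdict] = field(default_factory=list)


def summarise(step_verdicts: List[Verdict]) -> Verdict:
    """Hypothetical roll-up rule: any Fail fails the run; any untested step
    (or no steps at all) leaves it Not Tested; otherwise it passes."""
    if Verdict.FAIL in step_verdicts:
        return Verdict.FAIL
    if not step_verdicts or Verdict.NOT_TESTED in step_verdicts:
        return Verdict.NOT_TESTED
    return Verdict.PASS


def complete_run(record: ExecutionRecord, requirement_id: str,
                 defect_ids: List[str]) -> List[Tuple[str, str]]:
    """Write the summary to This Result and Latest Result, and return the
    (defect, target) links to create for each defect raised."""
    verdict = summarise(record.step_verdicts)
    record.this_result.verdict = verdict
    record.latest_result.verdict = verdict     # shared object, so the test case sees it too
    links: List[Tuple[str, str]] = []
    for defect in defect_ids:
        links.append((defect, f"{record.module_name}/This Result"))
        links.append((defect, requirement_id))
    return links
```

Each defect yields two links, one to the execution and one to the requirement, which is what makes the failure traceable from either side.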

Working with the data

Using a purpose-built test tool such as RQM will deliver a much greater level of automation, including creating a Test Execution Record with a single click, and the time saved by using such a tool may well be worth the cost.

This model is similar in structure to RQM, and so will provide an easy migration path from a cultural standpoint. For small scale testing, or testing where each step encompasses significant work, the model described here should provide a feasible way of working.

The reuse and linking described here have been designed to give enough navigation and reporting capability to make this a usable model. JavaScript extensions could enhance the model further, but I would caution against significant investment in building automation that can easily be bought in another tool.

Comments

3 responses to “Managing test data in DOORS Next Generation”

While it is true that I am not a doors user, I know a darn good helpful post when I read one. 🙂 Nice job, Hazel.

Thank you Maureen, I hope it will be useful, although I feared it might be a little incomprehensible

Hello Hazel, any more recent updates to the convergence/implementation across the different architectures you identified from DOORS Classic to RQM and DNG? Thank you for your blog/ideas! Ted